Semantic Parametric Reshaping of Human Body Models

Yipin Yang, Yao Yu, Yu Zhou, Sidan Du, James Davis, Ruigang Yang

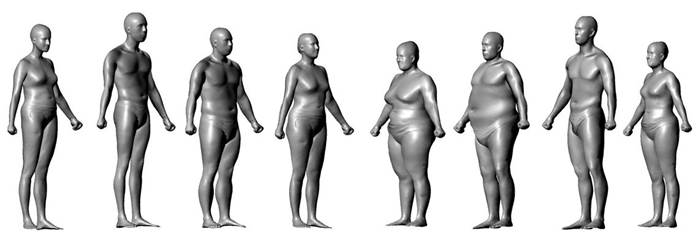

We develop a novel approach to generate human body models in a variety of shapes and poses by tuning semantic parameters. We investigate our approach using datasets of up to 3000 scanned body models that have been placed in point-to-point correspondence. Correspondence is established by nonrigid deformation of a template mesh. The size of the dataset allows a local model to be learned robustly, so that individual parts of the human body can be accurately reshaped according to semantic parameters. We evaluate performance on two datasets and find that our model outperforms existing methods.
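To make "reshaping via semantic parameters" concrete, here is a minimal sketch that deliberately swaps in a simpler technique than the local model described above: a single global affine regression from a vector of semantic parameters (for example, hypothetical height and weight values) to all vertex coordinates, fitted by least squares over meshes in point-to-point correspondence. The function names are hypothetical, and this is not the paper's method. Note that the released meshes do not include weights or heights, so any semantic values would have to be supplied separately.

```python
import numpy as np


def fit_linear_reshaper(semantic, vertices):
    """Fit a global affine map from semantic parameters to vertex positions.

    semantic: (n_meshes, n_params) array of per-subject semantic values
              (hypothetical inputs, e.g. height and weight).
    vertices: (n_meshes, n_vertices, 3) array of meshes that share the same
              vertex ordering (point-to-point correspondence).
    Returns (W, n_vertices), where W has shape (n_params + 1, n_vertices * 3).
    """
    n, v, _ = vertices.shape
    # Append a constant column so the fitted map includes a bias (mean shape).
    X = np.hstack([semantic, np.ones((n, 1))])
    # Flatten each mesh into one row vector of 3 * n_vertices coordinates.
    Y = vertices.reshape(n, v * 3)
    # Least-squares solution of X @ W ~= Y.
    W, *_ = np.linalg.lstsq(X, Y, rcond=None)
    return W, v


def reshape_body(W, n_vertices, params):
    """Predict one mesh of shape (n_vertices, 3) from a semantic parameter vector."""
    x = np.append(np.asarray(params, dtype=float), 1.0)
    return (x @ W).reshape(n_vertices, 3)
```

Changing one entry of `params` and calling `reshape_body` again moves every vertex along a fixed learned direction. A global map like this cannot reshape individual body parts independently, which is the limitation a local model is meant to address.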

Citation

If you use this dataset, please cite the following paper:

Dataset

The dataset contains registered male and female meshes, about 1500 of each, in point-to-point correspondence. Each mesh has 12500 vertices and 25000 facets.
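Because every mesh shares the same vertex ordering, vertex i refers to the same body location on every subject, so the meshes can be stacked and analysed as plain vectors. The sketch below computes a mean shape and principal shape components with NumPy. It assumes the meshes have already been loaded into an array of shape (n_meshes, 12500, 3); the file format and loading step are not described on this page and are left out, and the function names are hypothetical.

```python
import numpy as np


def shape_pca(vertices, n_components):
    """Mean shape and leading PCA components of meshes in correspondence.

    vertices: (n_meshes, n_vertices, 3) array with identical vertex ordering.
    Returns (mean, components, stddevs):
      mean:       (n_vertices, 3) average mesh
      components: (k, n_vertices, 3) orthonormal shape directions
      stddevs:    (k,) standard deviation of the data along each direction
    where k = min(n_components, number of available directions).
    """
    n, v, _ = vertices.shape
    X = vertices.reshape(n, v * 3)
    mean = X.mean(axis=0)
    # Thin SVD of the centered data: rows of Vt are the principal directions,
    # sorted by decreasing singular value.
    _, s, Vt = np.linalg.svd(X - mean, full_matrices=False)
    k = min(n_components, Vt.shape[0])
    stddevs = s[:k] / np.sqrt(max(n - 1, 1))
    return mean.reshape(v, 3), Vt[:k].reshape(k, v, 3), stddevs


def synthesize(mean, components, coeffs):
    """Build a new mesh as the mean plus a weighted sum of shape components."""
    coeffs = np.asarray(coeffs, dtype=float)
    # Contract the coefficient vector against the first axis of components.
    return mean + np.tensordot(coeffs, components, axes=1)
```

For about 1500 meshes of 12500 vertices, the flattened data matrix is roughly 1500 x 37500 doubles (about 450 MB), so the thin SVD (`full_matrices=False`) matters; a randomized or incremental PCA would be a reasonable substitute if memory is tight.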

The data is derived from the CAESAR dataset. I was given permission to share research results, but not the original data, so no raw scans, weights, heights, or other measurements are available with our meshes.

No commercial usage of the data is allowed.

Click here to obtain a username and password for dataset access.

Other human body data

- SCAPE - (paper website) (data) - The correspondence between the meshes above is identical to that in SCAPE

- FAUST - (website-data-code) - different people in different poses

- BodyLabs - (web) - Company commercializing body model tools

- MPI - (website-data-code) - different people in different poses from a different MPI lab

- MPII Human Shape - (website-data-code) - CAESAR dataset processed by MPI

- CAESAR - (website-data) - Sells the complete CAESAR dataset with additional measurements and is authorized to provide commercial licenses.

- other - (web) - Other data I find around my lab that might be helpful to someone